
This semester I'm working with the Business & Admin Writing class here at UCCS. One of the things that I think is really cool about this class is that the professor emphasizes Google as a research tool. His thinking is that once his students leave UCCS they won't have access to our library resources so they should get the skills to use the tools they're actually going to use on the job.

And that tool is Google.

Earlier this summer, I piloted an activity that asked participants to evaluate a collection of sources and decide which ones they would pick to build an argument. I called it Know Your Sources and you can read about it here. TL;DR: I wasn't really happy with it. The concept was good, but the execution left a lot to be desired. It depended on a single student volunteer in a synchronous online environment (and in its first iteration that volunteer ended up being the professor), and Google Forms wasn't a good delivery system.

Good news: I made it better! This class (TCID 2080) is a first-year writing course, but the emphasis is on Business and Technical writing rather than academic writing. So for KYS 2.0, I picked a single example that would be timely and similar to the work the students would be doing over the course of the semester. This helped streamline the activity IMMENSELY. I'm not opposed to having multiple options in the future, but I maybe should have limited the scope of KYS 1.0.

Padlet, however, was the real innovation. Not only was it easier to see all the items on the page, but everyone could also participate. Instead of asking one person to talk through their thought process, I dropped the link in the Microsoft Teams chat and asked the students to give the sources a star rating from 1 to 5. I wish the Padlet had sorted itself by that star rating -- that would have made it a lot easier to recap after the activity -- but that's more of a quibble than an issue. After the students had rated the sources, I asked the following questions:

What Worked: PADLET. This is exactly the vehicle for this activity that I was looking for. What Didn't: We didn't get into types of sources as much as we did during the discussion period of KYS 1.0, but I think that's partially because of the topic and class context. What I'd Change: If I can figure out how to get Padlet to sort the columns by star rating, that would take the discussion portion that much further. I would also probably write down my questions more clearly in advance to guide the discussion section.

One of the first classes I taught this Fall Semester was "American Literature 1820-1900 Print Cultures," which I was HYPE about because of my MA background in print culture. For this class, there had been a worksheet that the students had typically filled out in class after the librarian had demoed the relevant databases. And it's a good worksheet. But the synchronous online class is not a good environment for a worksheet that's designed to be filled out individually at the end of a class. So I made something new!

The activity I put together for this class was based on an assignment I did in my intro to librarianship course. When we did it, the idea was that we would be practising evaluating databases for purchase. I stripped out a lot of the library school kinds of questions (and I mean a lot; it was originally 7 pages) and put together a worksheet geared more toward exploration than evaluation. I put the students into two groups on Microsoft Teams (not an elegant process, but thanks to these instructions I figured it out) and assigned one group the American Periodicals Series and the other American Antiquarian Society Historical Periodicals. On the worksheet I linked to, you'll also see HathiTrust, which I demoed for them after we'd shared out. From the answers the groups came up with, we wrote a cheat sheet that highlighted the differences, similarities, and things to watch out for in each database.

This last part was inspired by a breakout session during the ARLIS/NA 2020 virtual conference. I was in a breakout group in the "Reimagining the Frame" session, where my group imagined an activity based on "Research as Inquiry" in which upper-level undergrads in art history or visual arts explored various relevant sources of images and put together a cheat sheet as a class after their exploration. I loved this idea and I was really excited to put it into practice. What Worked: The students were all able to find answers to all the questions and participated during the share out. I think we were able to learn actively together even on Microsoft Teams, and that in and of itself is a success. The two sessions hit about 80% of the answers I wanted them to, and that feels really good. I budgeted 20 minutes for the groups to work together and 10 minutes to share out, and that was more or less the right amount of time for each part of the activity. What Didn't Work: In a lesson in disciplinary jargon, it didn't occur to me to define all the terms I was using. We ran into some "what the hell is truncation?" moments. What I'll Change Next Time: In the second class, I used the MLA Bibliography database (which the prof had asked me to demonstrate a little so they knew where to find secondary sources) to define my terms more clearly, and that largely solved the disciplinary jargon issue. All in all, this was successful, and I'll definitely use the activity again!

Something that I've noticed from working with first year writing and rhetoric classes is that research questions are conceptually something that people want more clarity on, but they don't really know how to ask that question. The instructor I worked with this summer explicitly said he wanted me to cover research questions in my last session in class. He didn't give many specifics beyond that, but based on my experience in reference and research consultations, "what is a good research question?" was the issue I knew I wanted to cover.

I designed this activity to go after I had talked about the elements of a good research question and had modeled strengthening two mediocre examples. For an in-person session, I probably would have used a paper handout (especially as this is modeled after a colleague's activity), but this lent itself really well to the online setting. I got four students to identify which of the three options for each example they thought would be the best version of a research question investigating the prompt topic. I was gratified to see that for our third session together they needed very little prompting to talk through their thought process as they evaluated the questions. What Worked: The number of examples worked well. I had initially planned for there to be five, but I couldn't come up with a fifth relevant example topic so it ended up being four. That worked out though; I feel like it was enough repetition to drive the point home but not so much that it got tedious. The Google Form also worked well for this one; I didn't run into the scrolling up and down issue that I had with previous activities for this class. I made the form a "quiz" so that students looking at the form asynchronously could get feedback on their choices, which worked well for this activity because I envisioned there being a right answer (unlike the sources deck building game). What Didn't: Initially getting people to jump in was a little tough because while screensharing I couldn't see the chat or people raising hands, but that's more an issue with screensharing in Teams than anything else. What I'd Change: Honestly, this worked exactly as I expected it to, which was refreshing. Obviously I would tailor the examples to different first year writing and rhetoric class themes if I were to reuse the tool, but I would do that with any tool. It's great when things go exactly according to plan.

I have an image that I like to use when I have in-person classes to show the ways to come up with a research topic, but for the online environment I wanted to make it more dynamic. Initially, when I was putting it together, I wanted to make it a bit more choose-your-own-adventure themed, but to be totally honest I ran out of time before the class. I was able to make the spreadsheet more responsive than a static image would have been, but I'm not altogether happy with how it came out.

What Works: The flow is good. I like the two branch structure and I stand by the text of the tool. What Doesn't: The formatting gets real wonky when too much text is added. I also think it's too long in terms of its dimensions. What I'd Change: I think there's potential for Sheets to support a more dynamic research topic/question flow chart, but I'd have to do some work to figure out how that would work.

My teaching philosophy tends to be grounded very much in doing and playing around with things. I think this is really important, especially for information literacy, because a lot of what we teach in library instruction sessions is skills, not facts. And now that we're all online because of the global novel coronavirus pandemic, active learning tools that support these goals have to look different.

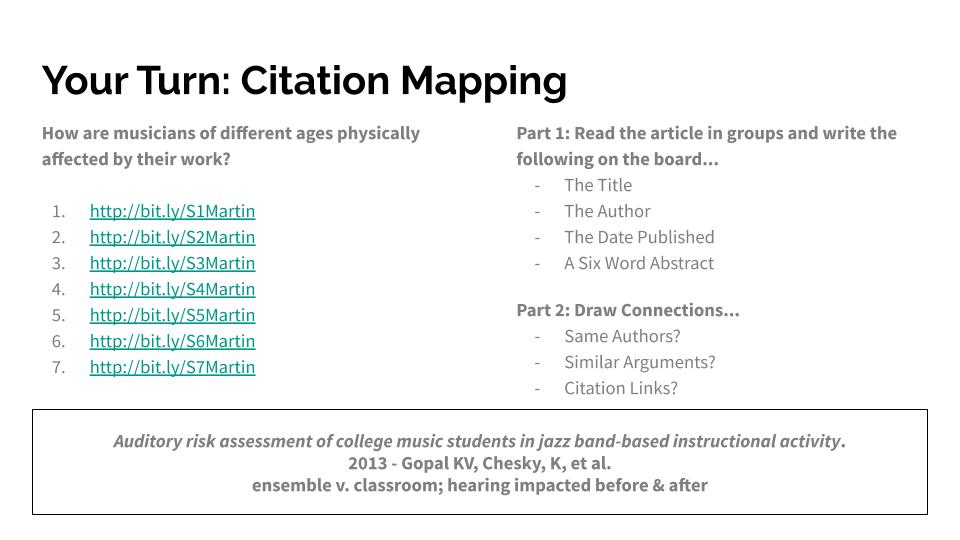

Of the learning games I've been exposed to, I really like Walsh and Williamson's SOURCES because I'm positively tickled by thinking about collecting sources for a bibliography as a deck building game. Naturally, handing cards out wouldn't be a super safe pedagogical choice even if we could be in the same room, and it's downright impossible over Microsoft Teams. So, I took the underlying structure of their game and made an activity that kept the instruction goals the same but was in a format that was friendlier to my current circumstances. You're welcome to click through the game yourself, but the core idea is that you pick a topic on the first page and then you're brought to a collection of 10-ish sources from which you pick some number that satisfy the "assignment" requirements and build toward a coherent argument. When you hit submit, you're taken to a discussion page where I describe what choices I would have made with the sources in front of me. The class I made this for was partially synchronous, partially asynchronous (students could choose which path), so I had to think about people in the Teams meeting with me on the day I was set to teach and people who would be watching the session later. What Worked: The collection of sources I put together for each prompt worked really well and offered different paths to a bibliography depending on how the player wanted to put their argument together. The students in class the day of said it was a really helpful way to think about what role different kinds of sources (scholarly, popular, etc.) played in constructing an argument. And they thought it was fun, which is a huge bonus because I wasn't sure how engaging it would come across on Teams.
What Didn't Work: I had a little trouble getting a student to volunteer (this was the first of three classes I was embedded in for this course) so their professor ended up being my volunteer, but that did lead to a good conversation between the two of us about what he was choosing and he was a real sport about talking through his process for the students. The Google Form format wasn't ideal because participants had to scroll up and down between the "source cards" and the selection options. Collecting the sources was pretty labor intensive. What I Want to Change in the Future: I might not use Google Forms for this game. The scrolling up and down is intrinsically a significant source of cognitive load, and I'd like to minimise that so that players can focus more on learning the skill and not navigating the format. I'm not sure what a good alternative would be, and the form was fine as a proof of concept, but I definitely want to make the underlying structure less distracting.

One of the things I'm glad of in the UCCS library classrooms is the whiteboard wall. It occasionally bothers me when an arrow I've drawn on one slide gets in the way of the next one, but the sheer amount of whiteboard space is great for getting students involved in library instruction. In particular, the whiteboard wall really works for teaching citation mapping (or ancestry searching or citation chaining...). One way I've seen this done in the past is to work it into the Google Form library worksheet, but one of my colleagues here does something different that I like a lot. She hands out slips of paper with bitlys to various articles and has students work in groups to skim the article and put up a few pieces of key information on the board. She then has them literally draw connections between the articles to show how they live in conversation. This requires a lot of prep work, but it leads to some great discussions about how scholarly conversations evolve.
In one of my first solo ENGL 1410 (think freshman writing) sessions, I opted to rework this slightly to eliminate the slips of paper. The class had a sound studies focus, so I chose articles about the impact of noise on the body:

What Worked: Students got really excited about showing which articles dealt with similar topics and which dealt with very different topics. They started to think about how they might group them in an argument, which wasn't on my agenda but which was a great conversation. What Didn't: The class had a bit of trouble seeing the links between who cited whom in our array of articles. What I Want to Do Differently Next Time: I might cut down on the number of articles used. I definitely want two examples by the same author, one example that's cited by more than one other example, and one example that is cited by another and cites another. I also want to have the students tell the class their article title, author, and publication date before putting them up on the board. I think that will address some of the issues around seeing who cites whom, and organizing the articles by date should spark conversation about currency.

One of the things I've struggled with doing well is the database demonstration. It's easy to do a rote example and forget the role that sparking interest plays in learning retention when you're tasked with doing a demo. Over the past quarter, I've had the opportunity to do three database demonstrations and I think in each case I've learned something new about how to maintain engagement both for me and for my audience.

Demonstrating Failure. For a Gender, Women, and Sexuality Studies class in January, I was asked to go through two of the guides in GWSS and to talk about searching finding aids. For this session, I opted not to prep a specific example but instead to feel out a search based on what the room talked about before my demo. Prior to the session, I had taken time to experiment with searching in a couple of different ways so that I could respond well to whatever prompt got thrown my way. In the one-shot, we ended up stumbling on a search with very limited results. On its face this was frustrating because, of course, I wanted to be able to show the depth of our collection, but for me it's important to remember that more often than not that's what the research process looks like. You usually get limited returns and have to figure out either how to find better results or why certain voices are missing. Unfortunately, due to time constraints, I didn't really get a chance to go too deep into that issue, but it's a healthy reminder that "failure" is ok in front of an audience.

Demonstrating Place. I also had the opportunity to demonstrate Readex's Congressional Serial Set database with a slight twist. Both of the times I got to roll through the different ways to engage the database, I was presenting to other librarians. Without the frame of a class assignment, I made a three-point plan to get through a couple of the ways to engage with the database. I started with a citation search. We get a lot of reference questions, especially on chat, that look like "where do I find xyz thing the citation looks like this: ____", so this was something that the audience would need to know at some point. After that, I demonstrated advanced search by showing how to find maps and images of Mt. Rainier National Park, and then the browse function by looking at the treaties signed by the territorial government with the tribes whose land the UW sits on.
Working a land acknowledgment into the database demonstration was about advancing inclusion and justice priorities, but it was also about making government documents immediately relevant. Looking at the browse search function this way also allowed me to gesture to what was missing and to the fact that the language we use today is not always the language used by primary sources.

The Jackson School Task Forces are the capstone project for UW International Relations students. After setting up the general structure of the LibGuides for all of the Task Force sections, I joined Jessica Albano on Task Force J. In the second week of the quarter, the task forces come to the library for a session on concept mapping and library resources to start them off on their research process.

The concept mapping part of the session went really well. After breaking up the group of 14 into three groups there was some slowness to start getting things up on the board, but once things got going they went smoothly. The share out also went really well. They came up with a lot of good relationships between concepts and some good starting keywords for further searching. What we wish had gone better was the division of the students into groups. Some task forces divide the students prior to their library instruction session, but this instructor had not chosen to do that. Since the concept mapping portion of the session was my responsibility, in the future I would ask the students for three overarching themes that have emerged in their research and let them cluster around those ideas rather than dividing them artificially. They expressed in the share out that the three themes we used to divide them felt artificial, so I would want to mitigate that in the future. Overall, it was a successful session despite some issues beyond my control and the rocky start that I unwittingly imposed.

On April 9, I got to observe a teaching session run by another librarian directed at students in UW's GEOG 315 class. Per the catalog description, GEOG 315: "Covers the beginning steps in the research process... Students develop basic library and writing skills as preparation for future research methods classes and independent research." The instruction session asked the students to share their topics and then covered potential geography research challenges and useful resources for their upcoming lit review and annotated bibliography assignments.

One of the mainstays of library instruction is the worksheet, and I was particularly interested in the way this librarian structured that part of the session. Instead of printing out a sheet of paper for students to fill in, they used a Google Form. I liked this for a number of reasons. One was that it saves on paper that students may or may not keep. Another was that it sent their answers to the section TAs and to the librarian so that they could return to that document later and push useful, specific resources to the students. Of course, it's a little harder for the students to keep their answers, and since the form walked them through how to annotate a source for an annotated bibliography, having that example to look back on would be crucial. I talked to the librarian later and they said this was the first time they had done this kind of thing and that this was something they would want to fix next time. All in all, I really liked this approach and plan to use it in the future. It's a really effective way to update a really useful teaching strategy.

Interested in any of these? Use the Contact tab to be in touch!

You can also view the current state of these activities on my instruction menu.
